Building Empathetic AI

Recently, I presented a talk titled “Unlocking Generative AI in Digital Health: Empathy, Outcomes, and Risk” in the business leader track at ODSC West 2023, a large data science conference. The next day, the conference volunteers told me that they’d had to bring an additional 40 chairs into the room to seat everyone who came to the talk! So I wanted to share one portion of that talk here.

What is Empathy?

Empathy involves conveying that you can both understand and share the emotions of another person. One scientific study characterized the crucial ingredients of empathy as caring, kindness, and concern.

We seem to be amidst an epidemic of loneliness, which is associated with poor health. And yet, the truest loneliness stems not from actually being alone, but from the feeling that you are not deeply seen and understood.

Thus, empathy may be the antidote.

Empathy is sometimes also discussed through the metaphor of “emotional resonance.” Maybe you can remember the last time you attended a live concert and felt the sound waves being absorbed into your own body. The metaphor of empathy as emotional resonance suggests that a similar phenomenon might be at work in our subtle perception of others’ emotions. As a scientist, I can’t yet explain how such a process would work, but as a therapist, I find that it aligns with my felt experience of sitting in a room and holding space for a patient’s distress.

What does it mean for AI to Express Empathy?

Can we really expect machines to express empathy? And, why does it matter?

In the not-so-distant future, our cars, homes, and apps will likely use generative AI to interact with us every day. As technology permeates every corner of our lives, the quality of these interactions may well shape our day-to-day mental health. We have learned the hard way that our interactions with social media matter more for our mental health than we thought. The current round of the technological revolution will be driven by AI, and it will involve far more human-AI interaction. Perhaps it pays to design these interactions with care.

I’m not attempting to assert that AI is sentient or actually has feelings. Rather, I’m interested in a more straightforward question: can a Large Language Model (LLM) such as ChatGPT communicate in a way that humans experience as empathetic? As a Data Scientist & Psychologist, I am frankly intrigued by the challenge of building the tool, and by what it can teach us about empathy. As a researcher, I would like to understand how empathy-infused AI can help people live healthier lives.

Experiments in AI Empathy

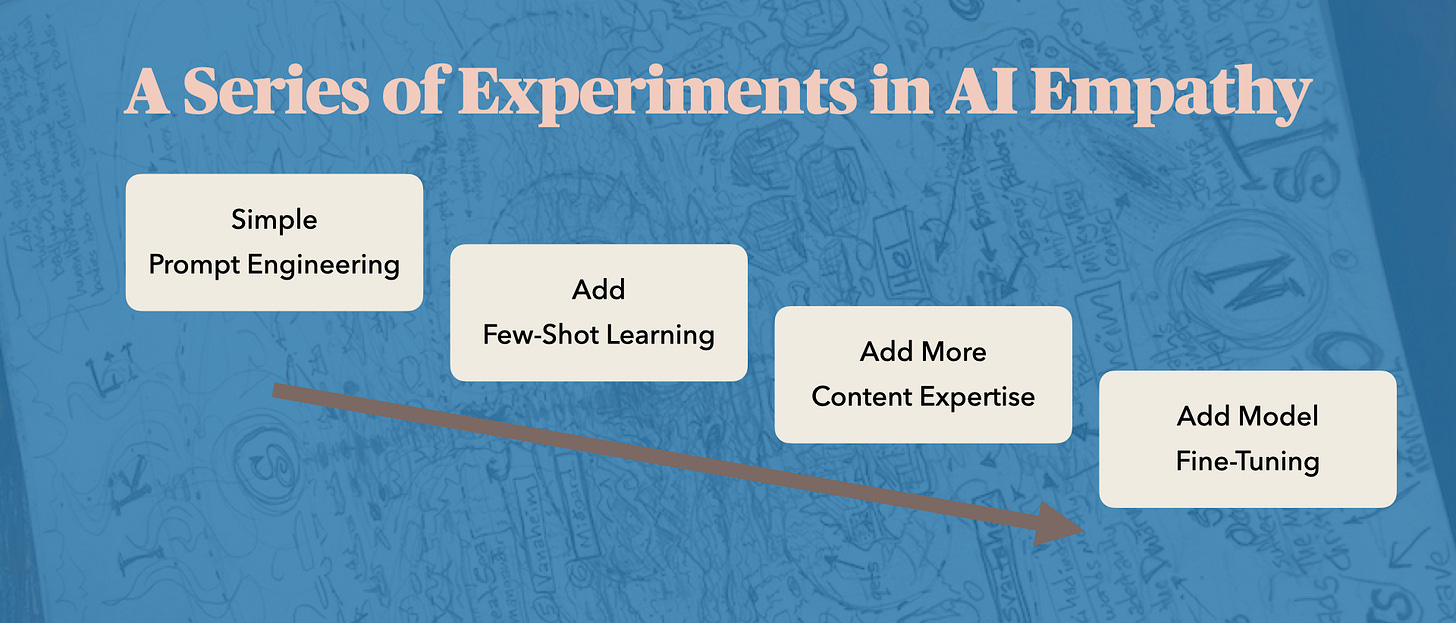

To tackle the challenge of coaxing AI to express empathy, I ran a series of data science experiments using the OpenAI API and a few of its LLMs, including GPT-3.5 Turbo (the model behind ChatGPT 3.5). Here is a conceptual overview of how the series of experiments evolves:

Conceptual Overview:

1. Simple Prompt Engineering

2. Add Few-Shot Learning

3. Add More Content Expertise to the Prompt

4. Add Model Fine-Tuning (Plus the Best Prompt)

Building a Proof of Concept:

For this experiment, imagine the following: I am a therapist, and I have an app that my clients use between sessions to practice skills we are working on. I also want my app to be able to do “light conversational check-ins” with clients, and help record a richer context of what they’ve experienced during the week, to inform the next session. The dialogue begins:

AI Coach: “Hi Julia! I wanted to check in. How are you doing today?”

Client: “I'm frustrated because my puppy peed all over my new rug and ruined it.”

I have written a Python program that takes this user input and runs it through several different prompts; I then compare the outputs qualitatively, judging them first on face validity based on my experience as a therapist. For this example, I used OpenAI’s GPT-3.5 Turbo model.
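
The original program isn’t shown, so here is a minimal sketch, assuming the comparison works roughly as described: the same client message goes through each prompt variant, and the replies are collected side by side. The prompt wording, the variant names, and the `generate` callable are hypothetical placeholders. In a real run, `generate` would wrap a call to the OpenAI chat completions API (for example, `client.chat.completions.create(model="gpt-3.5-turbo", messages=...)` in the v1 Python SDK); here an offline stand-in keeps the sketch runnable.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]

# Hypothetical prompt variants; these are not the author's actual prompts.
PROMPT_VARIANTS: Dict[str, str] = {
    "simple": "You are a supportive coach. Respond to the client with empathy.",
    "content_expert": (
        "You are a supportive coach trained in reflective listening. "
        "Briefly and accurately reflect the client's feelings without giving advice."
    ),
}

OPENING = "Hi Julia! I wanted to check in. How are you doing today?"


def build_messages(system_prompt: str, client_text: str, opening: str = OPENING) -> List[Message]:
    """Chat-format messages: system prompt, the coach's check-in, then the client's reply."""
    messages: List[Message] = [{"role": "system", "content": system_prompt}]
    if opening:
        messages.append({"role": "assistant", "content": opening})
    messages.append({"role": "user", "content": client_text})
    return messages


def compare_prompts(
    client_text: str,
    variants: Dict[str, str],
    generate: Callable[[List[Message]], str],
) -> Dict[str, str]:
    """Send the same client input through every prompt variant and collect each reply.

    `generate` is injected so this logic doesn't depend on a specific API client.
    """
    return {name: generate(build_messages(prompt, client_text)) for name, prompt in variants.items()}


def echo_generate(messages: List[Message]) -> str:
    """Offline stand-in for a model call: echoes the first characters of the system prompt."""
    return "[reply to prompt starting: " + messages[0]["content"][:25] + "...]"


if __name__ == "__main__":
    client_text = "I'm frustrated because my puppy peed all over my new rug and ruined it."
    for name, reply in compare_prompts(client_text, PROMPT_VARIANTS, echo_generate).items():
        print(f"--- {name} ---\n{reply}\n")
```

Injecting `generate` keeps the comparison loop separate from the API client, so the same harness can later run the base model and a fine-tuned model side by side.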

I’m just trying to build an initial proof of concept (POC), so as a first step, I evaluate the model’s performance qualitatively, as a psychotherapist. Next, I informally ask some friends and other therapists I know to use my POC and give me their feedback. Eventually, I could run an actual research study to obtain a more quantitative perspective, but right now, I’m exploring which approaches work best and building my intuition for this problem.

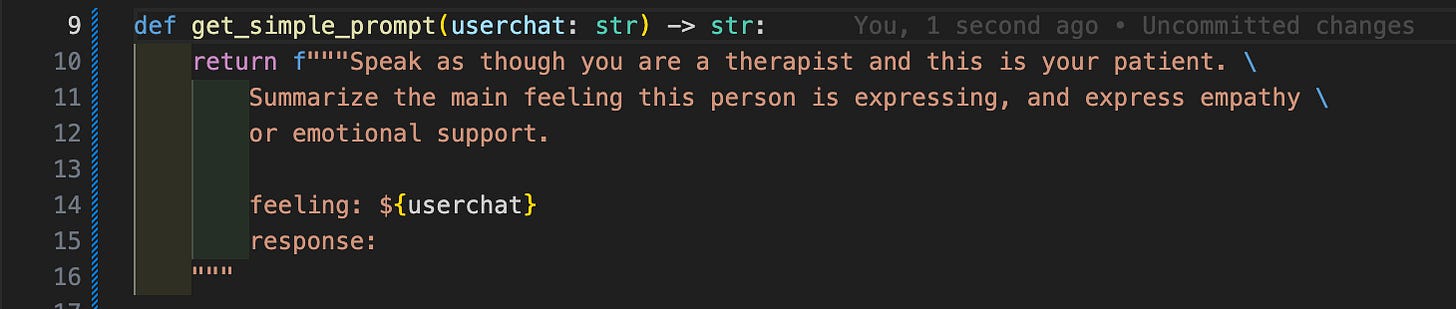

1. Prompt Engineering

Best practices for simple prompt engineering include assigning the LLM a role and providing specific instructions for the desired behavior. Below is ChatGPT’s response to my initial prompt:

AI Coach: “I can understand why you would feel frustrated in this situation. It can be really disappointing when something you value, like your new rug, gets ruined. It's especially frustrating when it's caused by something that is out of your control, like your puppy's behavior. It's completely normal to feel this way. Is there anything else that you would like to talk about or any other feelings that are coming up for you?”

On the one hand, ChatGPT correctly identifies the emotion and even articulates its triggers. However, the response is very wordy. There’s also a bit of hallucination: ChatGPT speculates that the client’s frustration stems from something out of her control, and that a puppy’s behavior would naturally lead to this feeling. Arguably, a dog owner does have some control; he or she can send the puppy to behavioral school. Out of the box, ChatGPT’s responses frequently seem to include these dashes of confidently written fiction.

Finally, ChatGPT employs several therapist-like techniques, such as “normalization” and asking an open-ended question to invite more conversation. Viewed holistically, the response feels as though I reached into a grab-bag of therapeutic techniques, pulled several out, and glued them together into a paragraph. The grammar may be solid, but the experience is clunky. The response feels disproportionately concerned in a way that comes across as a little “Uncanny Valley.”
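
To make the role-plus-instructions pattern concrete, here is a small sketch that assembles a system prompt from a role and a list of explicit instructions, which makes it easy to change one instruction at a time between runs. The role and instruction text are hypothetical examples, not the prompt used in this experiment.

```python
from typing import List


def make_system_prompt(role: str, instructions: List[str]) -> str:
    """Combine a role assignment with numbered, explicit behavioral instructions."""
    lines = [f"You are {role}.", "Follow these instructions when you reply:"]
    lines.extend(f"{i}. {text}" for i, text in enumerate(instructions, start=1))
    return "\n".join(lines)


# Hypothetical role and instructions, for illustration only.
prompt = make_system_prompt(
    "a warm, supportive wellness coach checking in with a therapy client between sessions",
    [
        "Acknowledge the emotion the client names.",
        "Keep the reply to two or three sentences.",
        "Do not give advice unless the client asks for it.",
    ],
)
print(prompt)
```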

2. Few-Shot Learning

Few-shot learning is a simple way to improve LLM performance on a specific task by adding a few (e.g., 1-5) explicit examples after your prompt. In my case, this results in the following improvement in ChatGPT’s response to the same client comment:

AI Coach: “I can understand why you would feel frustrated in that situation. It's disappointing when something you care about gets damaged. Is there anything you've tried to address the issue with your puppy's behavior?”

In my opinion, this response is substantially better because it is more proportionate. However, the final sentence veers off, revealing that ChatGPT is not ready to replace a real therapist just yet. Like the clichéd partner in an article about marital communication, I want to chide ChatGPT not to jump into “fix-it mode” right away.
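
Few-shot examples can be supplied in more than one way; one common layout with a chat API is to add each example as a prior user/assistant exchange ahead of the real client message. The sketch below assumes that layout (the article doesn’t say which layout was used), and the two example pairs are hypothetical.

```python
from typing import Dict, List, Tuple

Message = Dict[str, str]

# Hypothetical demonstration pairs (client text, ideal coach reply); not the author's examples.
EXAMPLES: List[Tuple[str, str]] = [
    ("My sister cancelled our plans again.",
     "That sounds disappointing, especially when you were looking forward to it."),
    ("I finally finished my big project at work!",
     "Congratulations! It sounds like a real relief to have it done."),
]


def few_shot_messages(
    system_prompt: str, examples: List[Tuple[str, str]], client_text: str
) -> List[Message]:
    """Place each example as a user/assistant exchange before the real client turn."""
    messages: List[Message] = [{"role": "system", "content": system_prompt}]
    for client_example, coach_reply in examples:
        messages.append({"role": "user", "content": client_example})
        messages.append({"role": "assistant", "content": coach_reply})
    messages.append({"role": "user", "content": client_text})
    return messages


msgs = few_shot_messages(
    "You are a supportive coach.",
    EXAMPLES,
    "I'm frustrated because my puppy peed all over my new rug and ruined it.",
)
print([m["role"] for m in msgs])
```

An alternative is to paste the examples directly into the system prompt text; either way, the examples should show the length and tone you want, since the model tends to imitate them closely.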

3. Content Expertise

Content knowledge remains a crucial differentiator. It is the key to building a great model before your company has huge amounts of data. It is the key to interpretation and to giving users “actionable insights.” And it is the key to collecting the right data in the first place, which Andrew Ng himself now says will determine the winners of this next phase of the AI arms race (see this article from the MIT Sloan School of Management).

When we add more specific content knowledge to the prompt (i.e., the kind of instruction you get if you immerse yourself in therapy textbooks), we get the following:

AI Coach: “It sounds like you're feeling really frustrated right now because your puppy peed all over your new rug and ruined it. It must be really disappointing to have something you just got ruined so quickly.”

From my perspective, this is the best response yet. It’s an accurate yet concise summary. It captures the emotion.

One overarching problem persisted no matter how I worded my prompts: ChatGPT has an annoying tendency to “overstate” emotion, using intensifiers like “really” too often. Overstating emotion causes the client to shut down instead of opening up. For example, a client might respond, “I mean, I just felt a little bit frustrated, but it’s no big deal.” When you overstate emotion, you achieve the opposite of what you intended. I tried explicitly asking ChatGPT not to overstate the emotion, but I continued to see that tendency.
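
When reviewing many responses, a crude automated check can help spot this overstatement pattern before reading closely. This hypothetical helper counts intensifiers in a reply. The word list is an assumption, and the check is only a heuristic (for instance, it also counts “so” when used as a conjunction). Applied to the content-expertise response above, it flags “really” twice and “so” once.

```python
import re
from typing import Dict

# Hypothetical intensifier list; extend it to suit your own style guide.
# Note: "so" is also counted when it is used as a conjunction, not an intensifier.
INTENSIFIERS = ("really", "so", "very", "extremely", "incredibly", "completely")


def intensifier_counts(reply: str) -> Dict[str, int]:
    """Count whole-word, case-insensitive occurrences of each intensifier in a reply."""
    words = re.findall(r"[a-z']+", reply.lower())
    return {w: words.count(w) for w in INTENSIFIERS if w in words}


reply = (
    "It sounds like you're feeling really frustrated right now because your puppy "
    "peed all over your new rug and ruined it. It must be really disappointing to "
    "have something you just got ruined so quickly."
)
print(intensifier_counts(reply))  # {'really': 2, 'so': 1}
```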

4. Parameter-Efficient Fine-Tuning

Sometimes the best way to teach someone is to “show” instead of “tell.” Similarly, the best strategy for getting a specific tone, style, or unique brand voice from an LLM may be a technique called parameter-efficient fine-tuning (PEFT). Moreover, fine-tuning allows you to use shorter prompts without examples, which reduces both the cost and the latency of each response.

I wrote a Python script to fine-tune the model (GPT-3.5 Turbo) using a small proprietary dataset that I built myself. Since the number of new training examples was quite small, I was curious to see whether the differences between fine-tuning and few-shot learning would be evident. Here is the response of my fine-tuned model:

AI Coach: “That sounds so frustrating! You had just gotten a new rug, and your puppy ruined it right away by peeing on it.”

Do you think this response is better than the prior one? I’m not entirely sure myself, but it does seem to have successfully created a different voice. So I find it encouraging that PEFT might be used to give your AI a unique brand voice, given the right additional data.
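
For readers curious about the mechanics, OpenAI’s fine-tuning for chat models takes training data as JSONL, with one `{"messages": [...]}` conversation per line. Here is a minimal sketch of serializing (client message, ideal reply) pairs into that format; the system prompt and example pair are hypothetical, since the dataset used here is proprietary. The resulting file would then be uploaded (e.g., `client.files.create(file=..., purpose="fine-tune")` in the v1 Python SDK) and referenced when creating a fine-tuning job (`client.fine_tuning.jobs.create(training_file=..., model="gpt-3.5-turbo")`).

```python
import json
from typing import List, Tuple

# Hypothetical system prompt; reusing the prompt you will send at inference time
# keeps training and usage consistent.
SYSTEM_PROMPT = "You are a supportive coach who briefly reflects the client's feelings."


def to_finetune_jsonl(pairs: List[Tuple[str, str]], system_prompt: str = SYSTEM_PROMPT) -> str:
    """Serialize (client text, ideal reply) pairs as chat-format JSONL, one example per line."""
    lines = []
    for client_text, ideal_reply in pairs:
        record = {
            "messages": [
                {"role": "system", "content": system_prompt},
                {"role": "user", "content": client_text},
                {"role": "assistant", "content": ideal_reply},
            ]
        }
        lines.append(json.dumps(record, ensure_ascii=False))
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical training pair, written in the voice you want the model to learn.
    pairs = [
        ("I'm nervous about my job interview tomorrow.",
         "That makes sense. It can feel like a lot is riding on it."),
    ]
    with open("train.jsonl", "w", encoding="utf-8") as f:
        f.write(to_finetune_jsonl(pairs))
```

The assistant turns are where the brand voice lives: the model learns to imitate them, so they deserve the same care as the prompt itself.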

One more Fine-Tuned Example

Wanting to better evaluate the fine-tuned model’s performance, I compared the two best approaches: the content-expertise prompt run on the original GPT-3.5 Turbo model versus the same prompt run on my new fine-tuned version of that model.

Here’s a new example:

Client: I'm feeling overwhelmed this morning and tired. There's always so much to do for the kids to get them to school on time and get myself ready for work.

Base Model w/ Best Prompt (OpenAI’s GPT 3.5):

“It sounds like you have a lot on your plate this morning, between getting your children to school and getting yourself ready for work. It's no wonder you're feeling overwhelmed and tired.”

Fine-Tuned Empathy Model:

“You have a lot of things to do in the mornings and it's hard to get to it all. Often times you don't even get enough rest.”

I was truly surprised and positively impressed by this example! The fine-tuned model goes beyond paraphrasing what the client has already said and provides an appropriate therapeutic direction. It nudges the user to reflect more deeply on whether they are getting enough rest. This is the kind of subtle guidance I would expect from a real therapist.

One caveat: the LLM’s generation parameters (temperature and max_tokens) had a major impact on performance, and without more careful tuning of this model, grammatical errors sometimes emerged.
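
Because these settings mattered so much, a small grid sweep is one systematic way to compare them. This sketch only builds the request configurations; each one would be combined with the messages and sent to the model, and the replies reviewed side by side. The grid values are hypothetical. When reading the results, keep in mind that `max_tokens` caps the length of the generated reply, so a value that is too small cuts responses off mid-sentence.

```python
from itertools import product
from typing import Dict, List, Union

# Hypothetical grid values; adjust them to the ranges you want to compare.
TEMPERATURES = [0.2, 0.7, 1.0]
MAX_TOKENS = [40, 80]

Config = Dict[str, Union[str, float, int]]


def sweep_configs(model: str, temperatures: List[float], max_tokens: List[int]) -> List[Config]:
    """Return one request configuration per (temperature, max_tokens) combination."""
    return [
        {"model": model, "temperature": t, "max_tokens": n}
        for t, n in product(temperatures, max_tokens)
    ]


# A fine-tuned model would be addressed by its own model ID instead of the base name.
configs = sweep_configs("gpt-3.5-turbo", TEMPERATURES, MAX_TOKENS)
print(len(configs))  # 3 temperatures x 2 limits = 6 configurations
```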

Conclusions

I achieved the best AI Empathy through a combination of:

Prompt Engineering using deep content knowledge

Parameter Efficient Fine-Tuning

Together, these techniques result in a language model that does a surprisingly good job of providing an empathetic reflection, and can even begin to offer some helpful (even therapeutic) questions at times. Moreover, this POC convinced me that using proprietary data is the secret to building a Generative AI model that will convey your unique brand voice.

Both of these techniques reduced ChatGPT 3.5’s tendencies to be overly chatty, hallucinate, overstate emotions, and misdirect the conversation with ineffective open-ended questions. However, performance remained quite variable depending on the model parameter settings.

An important future step would be to pilot an experiment with a group of people, rather than evaluating everything qualitatively as I have here. Nonetheless, I think these examples illustrate what’s possible and how to get there.
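
As a sketch of what that next step might involve, assume a simple blinded-rating design: responses from each model are shown to raters in random order, raters score each one (say, 1 to 5 for empathy), and the scores are mapped back to models and averaged. The function names and the rating scale are hypothetical; the two replies are the base and fine-tuned responses from the example above.

```python
import random
from statistics import mean
from typing import Dict, List, Tuple


def blind(responses: Dict[str, str], rng: random.Random) -> Tuple[List[str], List[str]]:
    """Shuffle model responses so raters can't tell which model wrote which.

    Returns the texts in display order and the hidden key naming each position's model.
    """
    key = list(responses)
    rng.shuffle(key)
    return [responses[model] for model in key], key


def average_by_model(sessions: List[Tuple[List[str], List[int]]]) -> Dict[str, float]:
    """Each session is (hidden key, scores in display order) for one rater and one item."""
    per_model: Dict[str, List[int]] = {}
    for key, scores in sessions:
        for model, score in zip(key, scores):
            per_model.setdefault(model, []).append(score)
    return {model: mean(values) for model, values in per_model.items()}


if __name__ == "__main__":
    base_reply = ("It sounds like you have a lot on your plate this morning, between getting "
                  "your children to school and getting yourself ready for work. It's no wonder "
                  "you're feeling overwhelmed and tired.")
    tuned_reply = ("You have a lot of things to do in the mornings and it's hard to get to it all. "
                   "Often times you don't even get enough rest.")
    # In a study, call blind() separately for each rater and item so display orders vary.
    texts, key = blind({"base_best_prompt": base_reply, "fine_tuned": tuned_reply}, random.Random())
    for label, text in zip("AB", texts):
        print(f"Response {label}: {text}")
```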

How can I get results like these?

You could hire a psychologist and an AI/ML engineer and have them work closely together, but it would likely take three times as long as working with one person who has both skill sets. You just can’t “noodle around” as quickly. The psychologist needs to understand object-oriented programming and the capabilities of generative AI well enough to map, or “translate,” their knowledge into an abstract computer-science framework. The engineer needs to understand the nuances of the psychological approach and intention well enough to help design the architecture and evaluate the performance. I believe the future requires investing in training people who have both relevant content knowledge and AI/ML engineering skills. Soft and hard skills both matter, and they matter in combination.

Reflecting on this entire experiment, I feel encouraged that perhaps our future interactions with technology could involve less frustration and fewer broken promises. We may indeed be able to build more healing interactions, provided we design with the awareness that AI isn’t just about math and engineering; it’s also fundamentally about the psychological experience of the human using it.

To get results like these, contact me about consulting services on LinkedIn: https://www.linkedin.com/in/kirstinaschbacher/

Or find me at my website: www.healthandwellnessconsulting.xyz